The world of Artificial Intelligence (AI) is like a complex maze, and the scientists who work in this field often use specialized terms (or “jargon”) to explain their work. This can make it difficult for us, the non-specialists, to understand articles about AI. So, we’ve put together a glossary to explain some of the most important terms you’ll often see in AI articles.

We’ll regularly update this list with new concepts, as researchers are constantly discovering new ways to push the boundaries of AI, while also identifying emerging safety risks.

- AGI (Artificial General Intelligence)

- AI Agent

- Chain-of-Thought

- Deep Learning

- Diffusion

- Distillation

- Fine-tuning

- GAN (Generative Adversarial Network)

- Hallucination

- Inference

- Large Language Model (LLM)

- Neural Network

- Training

- Transfer Learning

- Weights

AGI (Artificial General Intelligence)

AGI stands for Artificial General Intelligence. It’s a somewhat vague concept, but generally, it refers to AI that’s more capable than the average human at many, if not most, tasks. For example, Sam Altman, CEO of OpenAI, describes AGI as “the equivalent of a median human that you could hire as a co-worker.”

Essentially, AGI is an AI system that can perform a wide range of tasks, rather than specializing in one area as current AIs do. Even leading AI researchers are still working out how to define AGI precisely, so don’t worry if you find it a bit confusing!

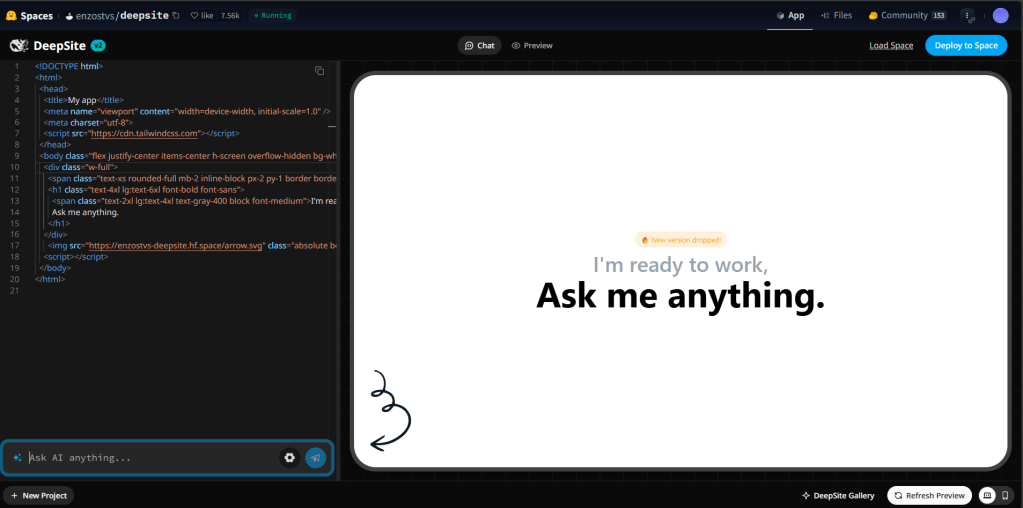

AI Agent

An AI agent is a tool that uses AI technologies to perform a series of tasks on your behalf, going beyond what a basic AI chatbot could do. For example, it could automatically file expenses, book flight tickets or a table at a restaurant, or even write and maintain code.

Currently, “AI agent” can have different meanings to different people, as the field is still evolving. However, the basic idea is an autonomous system that can combine multiple AI systems to carry out multi-step tasks.

Chain-of-Thought

Think about how we solve a problem. With a simple question like “Which animal is taller, a giraffe or a cat?”, you can answer immediately. But for more complex problems, you might need a pen and paper to find the right answer because there are intermediate steps. For instance, if a farmer has chickens and cows, and together they have 40 heads and 120 legs, you might need to write a simple equation to find the answer (20 chickens and 20 cows).
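To make those “intermediate steps” concrete, here is a minimal Python sketch that works through the farmer puzzle one step at a time. The function name `solve_heads_and_legs` is made up for this illustration; it shows step-by-step problem solving in general, not how an LLM reasons internally.

```python
def solve_heads_and_legs(heads: int, legs: int) -> tuple[int, int]:
    """Return (chickens, cows) for the given head and leg counts."""
    # Step 1: pretend every animal is a chicken, so 2 legs per head.
    legs_if_all_chickens = 2 * heads
    # Step 2: every cow adds 2 extra legs on top of that baseline.
    extra_legs = legs - legs_if_all_chickens
    if extra_legs < 0 or extra_legs % 2 != 0:
        raise ValueError("no whole-number solution for these counts")
    cows = extra_legs // 2
    # Step 3: the remaining heads belong to chickens.
    chickens = heads - cows
    if chickens < 0:
        raise ValueError("no whole-number solution for these counts")
    return chickens, cows


print(solve_heads_and_legs(40, 120))  # (20, 20)
```

Each comment marks one intermediate step, which is the same idea a chain-of-thought model applies when it writes out its reasoning before giving a final answer.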

In an AI context, chain-of-thought reasoning for large language models (LLMs) means breaking down a big problem into smaller, intermediate steps to improve the quality of the final result. This process usually takes longer to get an answer, but the answer is more likely to be correct, especially in logic or coding problems. Chain-of-thought models are developed from traditional large language models and are optimized for this type of thinking thanks to reinforcement learning.

Deep Learning

Deep learning is a subset of self-improving machine learning. In deep learning, AI algorithms are designed with a multi-layered, artificial neural network (ANN) structure. This allows them to make more complex connections compared to simpler machine learning systems (e.g., linear models). The structure of deep learning algorithms is inspired by the interconnected pathways of neurons in the human brain.

Deep learning AI models can identify important characteristics in data themselves, rather than requiring human engineers to define these features. This structure also supports algorithms that can learn from errors and, through repetition and adjustment, improve their own outputs. However, deep learning systems require a lot of data points (millions or more) to yield good results. They also typically take longer to train than simpler machine learning algorithms, so development costs tend to be higher.
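To give a feel for what “multi-layered” means, here is a minimal sketch of data flowing through a tiny two-layer network in plain Python. The layer sizes, the weight values, and the function names are arbitrary choices for illustration; real deep learning systems learn their weights from data and are vastly larger.

```python
# Hand-picked (not learned) weights, purely for illustration.
HIDDEN_WEIGHTS = [[1.0, -1.0], [0.5, 0.5]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[2.0, 1.0]]
OUTPUT_BIASES = [0.5]


def relu(values):
    """Keep positive values and replace negative ones with zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One layer: each output is a weighted sum of all inputs plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def forward(inputs):
    """Pass the inputs through a hidden layer, then an output layer."""
    hidden = relu(dense(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES))
    return dense(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)


print(forward([3.0, 1.0]))  # [5.5]
```

Stacking layers like this, with a non-linear step such as `relu` in between, is what lets a network capture relationships that a single linear model cannot.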

Diffusion

Diffusion is the core technology behind many AI models that generate art, music, and text. Inspired by physics, diffusion systems slowly “destroy” the structure of data (e.g., photos, songs, etc.) by adding noise until nothing is left. In physics, diffusion is spontaneous and irreversible (sugar dissolved in coffee cannot be restored to cube form). But diffusion systems in AI learn a “reverse diffusion” process to restore the destroyed data, thereby gaining the ability to recover data from noise.
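The “destroying” half of that process is simple enough to sketch. The Python below uses one common noising rule, in which each step shrinks the data slightly and mixes in a little Gaussian noise; the function names and the noise schedule (`betas`) are assumptions made for this example. The “reverse diffusion” half is learned by a neural network and is not shown here.

```python
import math
import random


def forward_diffusion(data, betas, rng):
    """Gradually mix Gaussian noise into `data`, one step per beta."""
    noisy = list(data)
    for beta in betas:
        noisy = [
            math.sqrt(1.0 - beta) * x + math.sqrt(beta) * rng.gauss(0.0, 1.0)
            for x in noisy
        ]
    return noisy


def remaining_signal(betas):
    """Fraction of the original signal's amplitude left after all steps."""
    return math.sqrt(math.prod(1.0 - beta for beta in betas))


rng = random.Random(0)
betas = [0.02] * 500  # 500 small noising steps (an arbitrary schedule)
pixels = [0.1, 0.5, 0.9]  # stand-in for real data such as image pixels

print(forward_diffusion(pixels, betas, rng))  # essentially pure noise
print(remaining_signal(betas))  # well under 0.01
```

After enough steps almost nothing of the original values survives, which is why a model that can undo the process step by step ends up able to produce data starting from noise.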

Distillation

Distillation is a technique used to extract knowledge from a large AI model using a “teacher-student” model. Developers send requests to a teacher model and record the outputs. Answers are sometimes compared with a dataset to see how accurate they are. These outputs are then used to train the student model, which is trained to approximate the teacher’s behavior.
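As a rough illustration of that loop, here is a toy Python sketch. The `teacher` function is a hypothetical stand-in for a large model, and the “student” is just two numbers fitted by gradient descent; in practice both are neural networks and the student is much smaller than the teacher.

```python
def teacher(x: float) -> float:
    """Hypothetical stand-in for a large model (chosen to be easy to check)."""
    return 3.0 * x + 1.0


def distill(prompts, steps=2000, lr=0.1):
    """Fit a tiny student (w, b) to the teacher's recorded outputs."""
    # 1. Send requests to the teacher and record what it answers.
    recorded = [(x, teacher(x)) for x in prompts]
    # 2. Train the student to reproduce those recorded answers.
    w, b = 0.0, 0.0
    n = len(recorded)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in recorded) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in recorded) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


prompts = [i / 10 for i in range(11)]
w, b = distill(prompts)
print(round(w, 3), round(b, 3))  # 3.0 1.0
```

Notice that the student never sees any “ground truth” data here, only the teacher’s answers, which is the defining feature of distillation.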

Distillation can be used to create a smaller, more efficient model based on a larger model with minimal “distillation loss.” This is likely how OpenAI developed GPT-4 Turbo, a faster version of GPT-4.

While all AI companies use distillation internally, it may also have been used by some AI companies to catch up with cutting-edge models. Distillation from a competitor usually violates the terms of service of AI APIs and chat assistants.

Fine-tuning

Fine-tuning refers to further training an AI model to optimize its performance for a more specific task or area than was previously the focus of its training. This is typically done by feeding in new, specialized (i.e., task-oriented) data.

Many AI startups are taking large language models as a starting point to build a commercial product, but they are aiming to boost utility for a target sector or task by supplementing earlier training cycles with fine-tuning based on their own domain-specific knowledge and expertise.

GAN (Generative Adversarial Network)

A GAN, or Generative Adversarial Network, is a type of machine learning framework that underpins some important developments in generative AI when it comes to producing realistic data – including (but not only) “deepfake” tools.

A GAN involves two neural networks: a generator and a discriminator. The generator uses its training data to produce an output, which is then passed to the discriminator to evaluate. The discriminator’s job is to classify whether the output is real or fake. Both networks compete with each other: the generator tries to create data convincing enough to fool the discriminator, while the discriminator works to spot artificially generated data. This structured competition optimizes AI outputs to be more realistic without the need for additional human intervention. However, GANs work best for narrower applications (such as producing realistic photos or videos), rather than general-purpose AI.

Hallucination

Hallucination is the AI industry’s preferred term for AI models making things up – literally generating incorrect information. Obviously, it’s a huge problem for AI quality.

“Hallucinations” produce generative AI outputs that can be misleading and could even lead to real-life risks, with potentially dangerous consequences (think of a health query that returns harmful medical advice). This is why most generative AI tools’ fine print now warns users to verify AI-generated answers, even though such disclaimers are usually far less prominent than the information the tools dispense at the touch of a button.

The problem of AIs fabricating information is thought to arise from gaps in training data. For general-purpose generative AI in particular (also sometimes known as foundation models), this looks difficult to resolve. There is simply not enough data in existence to train AI models to comprehensively resolve all the questions we could possibly ask. In short: we haven’t invented God (yet).

“Hallucinations” are contributing to a push towards increasingly specialized and/or vertical AI models (i.e., domain-specific AIs that require narrower expertise) as a way to reduce the likelihood of knowledge gaps and shrink disinformation risks.

Inference

Inference is the process of running an AI model. It’s setting a model loose to make predictions or draw conclusions from previously seen data. To be clear, inference cannot happen without training; a model must learn patterns in a set of data before it can effectively extrapolate from this training data.

Many types of hardware can perform inference, ranging from smartphone processors to beefy GPUs to custom-designed AI accelerators. But not all of them can run models equally well. Very large models would take ages to make predictions on, say, a laptop versus a cloud server with high-end AI chips.

Large Language Model (LLM)

Large language models, or LLMs, are the AI models used by popular AI assistants, such as ChatGPT, Claude, Google’s Gemini, Meta’s AI Llama, Microsoft Copilot, or Mistral’s Le Chat. When you chat with an AI assistant, you interact with a large language model that processes your request directly or with the help of different available tools, such as web browsing or code interpreters.

AI assistants and LLMs can have different names. For instance, GPT is OpenAI’s large language model and ChatGPT is the AI assistant product.

LLMs are deep neural networks made of billions of numerical parameters (or weights, see below) that learn the relationships between words and phrases and create a representation of language, a sort of multidimensional map of words.

These models are created from encoding the patterns they find in billions of books, articles, and transcripts. When you prompt an LLM, the model generates the most likely pattern that fits the prompt. It then evaluates the most probable next word after the last one based on what was said before. Repeat, repeat, and repeat.
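The “predict the next word, then repeat” loop can be imitated with a toy model. The Python sketch below simply counts which word follows which in a tiny made-up corpus and always picks the most frequent follower; the function names and the corpus are invented for this example. Real LLMs work on tokens rather than whole words, use a neural network instead of a lookup table, and usually sample from the likely options rather than always taking the top one.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it and how often."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def generate(counts, start, length):
    """Repeatedly append the most likely next word."""
    output = [start]
    for _ in range(length):
        followers = counts.get(output[-1])
        if not followers:
            break  # this word was never followed by anything in the corpus
        output.append(followers.most_common(1)[0][0])
    return " ".join(output)


corpus = "the cat sat on the mat then the cat sat on the sofa"
model = train_bigram(corpus)
print(generate(model, "the", 4))  # the cat sat on the
```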

Neural Network

A neural network refers to the multi-layered algorithmic structure that underpins deep learning – and, more broadly, the whole boom in generative AI tools following the emergence of large language models.

Although the idea of taking inspiration from the densely interconnected pathways of the human brain as a design structure for data processing algorithms dates all the way back to the 1940s, it was the much more recent rise of graphical processing hardware (GPUs) – via the video game industry – that really unlocked the power of this theory. These chips proved well suited to training algorithms with many more layers than was possible in earlier epochs – enabling neural network-based AI systems to achieve far better performance across many domains, including voice recognition, autonomous navigation, and drug discovery.

Training

Training is the process of developing machine learning AIs. In simple terms, this refers to data being fed in so that the model can learn from patterns and generate useful outputs.

Things can get a bit philosophical at this point in the AI stack – since, pre-training, the mathematical structure that’s used as the starting point for developing a learning system is just a bunch of layers and random numbers. It’s only through training that the AI model really takes shape. Essentially, it’s the process of the system responding to characteristics in the data that enables it to adapt outputs towards a sought-for goal – whether that’s identifying images of cats or producing a haiku on demand.
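Here is about the smallest possible sketch of that idea in Python: a “model” with a single number in it, which starts out random and is nudged, step by step, towards outputs that match the examples. The data, the learning rate, and the function name are all assumptions made for illustration.

```python
import random


def train(examples, steps=500, lr=0.05, seed=0):
    """Learn one weight so that weight * x is close to y for each example."""
    rng = random.Random(seed)
    weight = rng.uniform(-1.0, 1.0)  # before training: just a random number
    for _ in range(steps):
        # How the average squared error changes as the weight changes.
        grad = sum(2 * (weight * x - y) * x for x, y in examples) / len(examples)
        # Nudge the weight in the direction that reduces the error.
        weight -= lr * grad
    return weight


examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # hidden pattern: y = 2 * x
print(round(train(examples), 3))  # 2.0
```

Nobody told the program that the answer was “multiply by two”; it got there by repeatedly comparing its outputs with the examples and adjusting.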

It’s important to note that not all AI requires training. Rule-based AIs that are programmed to follow manually predefined instructions – such as linear chatbots – don’t need to undergo training. However, such AI systems are likely to be more constrained than (well-trained) self-learning systems.

Still, training can be expensive because it requires a lot of inputs – and, typically, the volumes of inputs required for such models have been trending upwards.

Hybrid approaches can sometimes be used to shortcut model development and help manage costs, such as doing data-driven fine-tuning of a rule-based AI – meaning development requires less data, compute, energy, and algorithmic complexity than if the developer had started building from scratch.

Transfer Learning

Transfer learning is a technique where a previously trained AI model is used as the starting point for developing a new model for a different but typically related task – allowing knowledge gained in previous training cycles to be reapplied.

Transfer learning can drive efficiency savings by shortcutting model development. It can also be useful when data for the task the model is being developed for is somewhat limited. But it’s important to note that the approach has limitations. Models that rely on transfer learning to gain generalized capabilities will likely require training on additional data in order to perform well in their domain of focus.

Weights

Weights are central to AI training, as they determine how much importance (or weight) is given to different features (or input variables) in the data used for training the system – thereby shaping the AI model’s output.

Put another way, weights are numerical parameters that define what’s most salient in a dataset for the given training task. They achieve their function by applying multiplication to inputs. Model training typically begins with weights that are randomly assigned, but as the process unfolds, the weights adjust as the model seeks to arrive at an output that more closely matches the target.

For example, an AI model for predicting housing prices that’s trained on historical real estate data for a target location could include weights for features such as the number of bedrooms and bathrooms, whether a property is detached or semi-detached, whether it has parking, a garage, and so on.

Ultimately, the weights the model attaches to each of these inputs reflect how much they influence the value of a property, based on the given dataset.
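The housing example can be written out in a few lines of Python. Every number below is invented purely for illustration (a real model would learn its weights from data), and `predict_price` is a hypothetical name; the point is only to show weights “applying multiplication to inputs.”

```python
# Made-up weights: how much each feature adds to the predicted price.
WEIGHTS = {
    "bedrooms": 25_000.0,
    "bathrooms": 10_000.0,
    "detached": 40_000.0,  # 1 if detached, 0 if semi-detached
    "parking": 5_000.0,    # 1 if the property has parking, else 0
}
BASE_PRICE = 100_000.0  # made-up starting value before any features


def predict_price(features):
    """Multiply each feature by its weight and add everything up."""
    return BASE_PRICE + sum(
        WEIGHTS[name] * value for name, value in features.items()
    )


house = {"bedrooms": 3, "bathrooms": 2, "detached": 1, "parking": 0}
print(predict_price(house))  # 235000.0
```

A larger weight means that feature moves the prediction more, which is exactly what “importance” means here.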
